Problem:

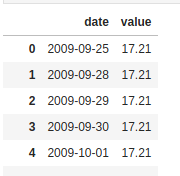

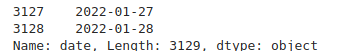

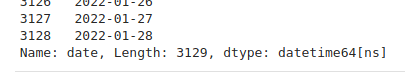

You are trying to plot a pandas DataFrame or Series using code such as

df.plot()

but you see an error message like

Traceback (most recent call last):
  ...
  File "/usr/local/lib/python3.11/site-packages/matplotlib/figure.py", line 3390, in savefig
    self.canvas.print_figure(fname, **kwargs)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/backend_bases.py", line 2164, in print_figure
    self.figure.draw(renderer)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/artist.py", line 95, in draw_wrapper
    result = draw(artist, renderer, *args, **kwargs)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/matplotlib/artist.py", line 72, in draw_wrapper
    return draw(artist, renderer)
           ^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/matplotlib/figure.py", line 3154, in draw
    mimage._draw_list_compositing_images(
  File "/usr/local/lib/python3.11/site-packages/matplotlib/image.py", line 132, in _draw_list_compositing_images
    a.draw(renderer)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/artist.py", line 72, in draw_wrapper
    return draw(artist, renderer)
           ^^^^^^^^^^^^^^^^^^^^^^
  File "/usr/local/lib/python3.11/site-packages/matplotlib/axes/_base.py", line 3034, in draw
    self._update_title_position(renderer)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/axes/_base.py", line 2969, in _update_title_position
    bb = ax.xaxis.get_tightbbox(renderer)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/axis.py", line 1334, in get_tightbbox
    ticks_to_draw = self._update_ticks()
  File "/usr/local/lib/python3.11/site-packages/matplotlib/axis.py", line 1276, in _update_ticks
    major_labels = self.major.formatter.format_ticks(major_locs)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/ticker.py", line 216, in format_ticks
    return [self(value, i) for i, value in enumerate(values)]
  File "/usr/local/lib/python3.11/site-packages/matplotlib/ticker.py", line 216, in <listcomp>
    return [self(value, i) for i, value in enumerate(values)]
  File "/usr/local/lib/python3.11/site-packages/matplotlib/dates.py", line 649, in __call__
    result = num2date(x, self.tz).strftime(self.fmt)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/dates.py", line 543, in num2date
    return _from_ordinalf_np_vectorized(x, tz).tolist()
  File "/usr/local/lib/python3.11/site-packages/numpy/lib/function_base.py", line 2372, in __call__
    return self._call_as_normal(*args, **kwargs)
  File "/usr/local/lib/python3.11/site-packages/numpy/lib/function_base.py", line 2365, in _call_as_normal
    return self._vectorize_call(func=func, args=vargs)
  File "/usr/local/lib/python3.11/site-packages/numpy/lib/function_base.py", line 2455, in _vectorize_call
    outputs = ufunc(*inputs)
  File "/usr/local/lib/python3.11/site-packages/matplotlib/dates.py", line 362, in _from_ordinalf
    raise ValueError(f'Date ordinal {x} converts to {dt} (using

Solution:

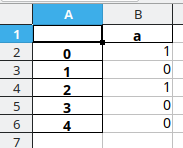

This issue has been discussed on both the Matplotlib GitHub and the pandas GitHub. It occurs when you call df.plot() on a DataFrame with a datetime index and then set custom formatting options for the x axis, such as a matplotlib date formatter.

For now, you can work around this bug by plotting the data manually instead:

import matplotlib.pyplot as plt

for column in df.columns:
    plt.plot(df.index.values, df[column].values, label=column)

Keep in mind that when plotting everything manually, you might need to set some matplotlib options yourself, such as the legend or axis labels, which pandas would otherwise set automatically.
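To make the workaround concrete, here is a minimal, self-contained sketch of manual plotting combined with a custom date formatter on the x axis. The DataFrame contents, the column names (`temperature`, `humidity`), the date format, and the output filename `manual_plot.png` are all made-up assumptions for illustration; adapt them to your own data.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so this also runs without a display

import matplotlib.dates as mdates
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

# Hypothetical example data: 30 daily samples with a DatetimeIndex
index = pd.date_range("2023-01-01", periods=30, freq="D")
df = pd.DataFrame(
    {
        "temperature": np.linspace(18.0, 24.0, 30),
        "humidity": np.linspace(40.0, 55.0, 30),
    },
    index=index,
)

fig, ax = plt.subplots()

# Plot each column manually instead of calling df.plot()
for column in df.columns:
    ax.plot(df.index.values, df[column].values, label=column)

# Custom x axis formatting, applied to an axis that matplotlib itself set up
ax.xaxis.set_major_locator(mdates.DayLocator(interval=7))
ax.xaxis.set_major_formatter(mdates.DateFormatter("%Y-%m-%d"))

# Options that pandas would otherwise have set automatically
ax.legend()
ax.set_xlabel("date")
fig.autofmt_xdate()  # rotate the date labels so they do not overlap

fig.savefig("manual_plot.png")
```

Because matplotlib receives the raw `datetime64` values here, the axis uses matplotlib's own date units, which is what `DateFormatter` expects. As a possible alternative, pandas documents an `x_compat=True` argument for `plot()` that suppresses its own tick resolution adjustment; whether that avoids this particular error with your pandas and matplotlib versions is something you would need to verify yourself.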