ng build --prod --aot --build-optimizer --common-chunk --vendor-chunk --named-chunks

While it will consume quite some CPU and RAM during the build, it will produce a highly efficient compiled output.

My .jitsi-meet-cfg/jigasi/sip-communicator.properties is getting overwritten every time I start Jigasi, but I need to set

net.java.sip.communicator.impl.protocol.sip.acc1.AUTHORIZATION_NAME=abc123abc

in order for my SIP communication to work.

Run this script after starting the jigasi container. It will fix the overwritten config and then restart the Jigasi Java process without restarting the container

#!/bin/sh
sed -i -e "s/# SIP account/net.java.sip.communicator.impl.protocol.sip.acc1.AUTHORIZATION_NAME=abc123abc/g" .jitsi-meet-cfg/jigasi/sip-communicator.properties
# Reload config hack
docker-compose -f docker-compose.yml -f jigasi.yml exec jigasi /bin/bash -c 'kill $(pidof java)'

Original source: This GitHub ticket which provides a similar solution for a similar problem

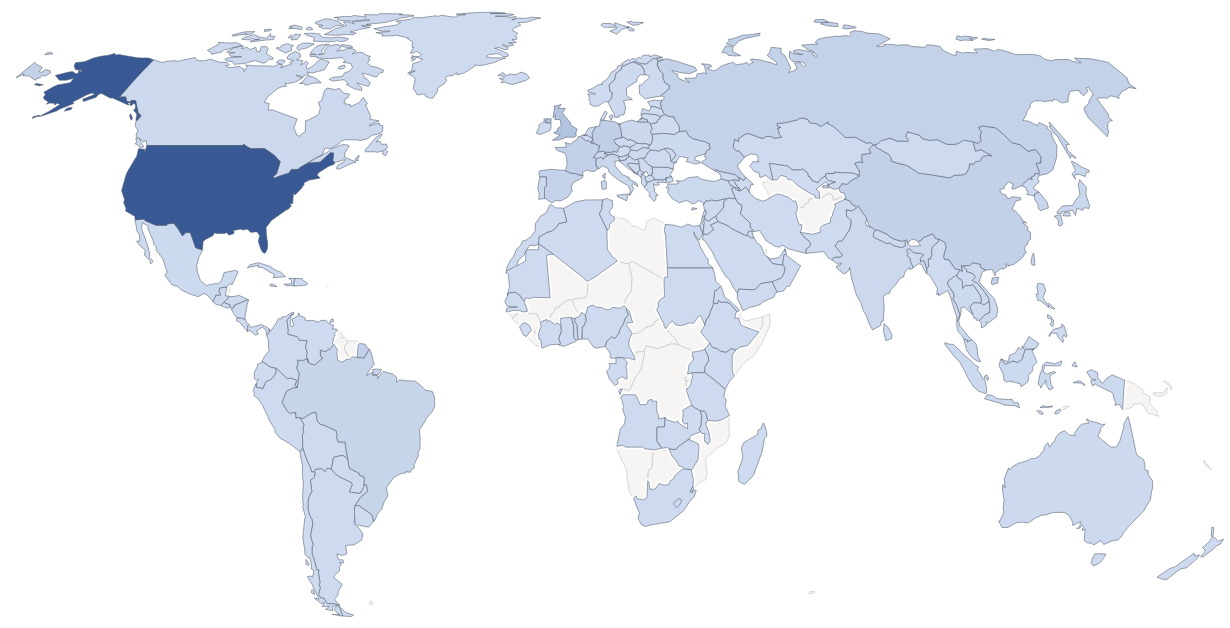

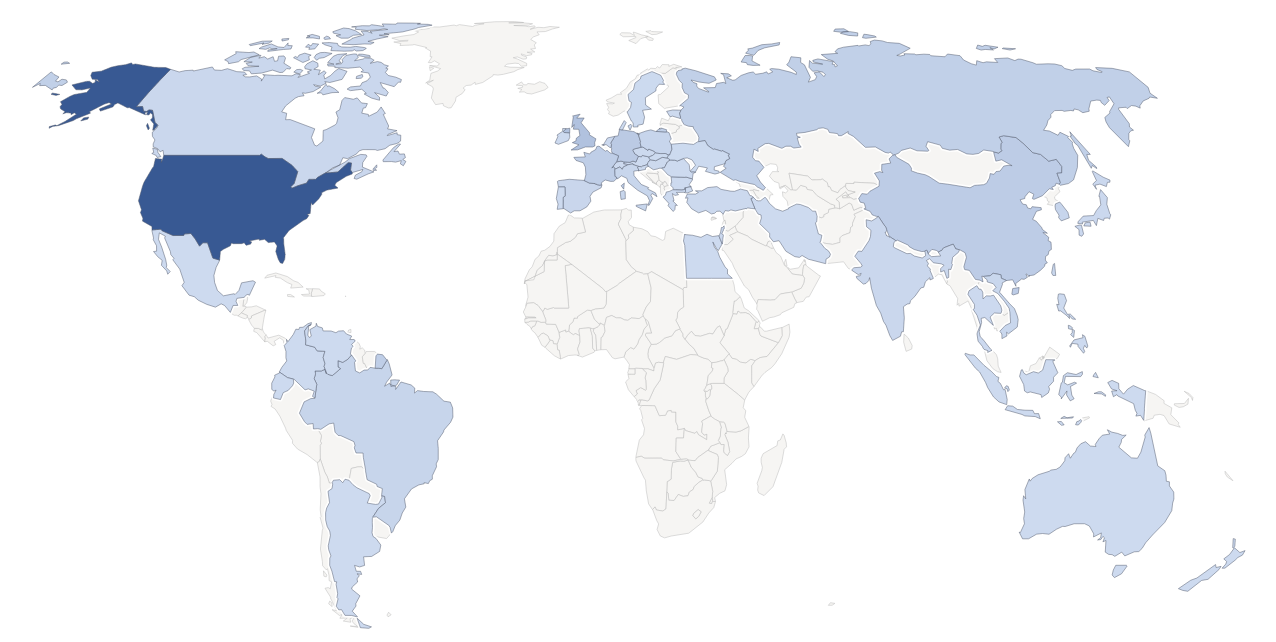

Since 2012, TechOverflow has been providing technology solutions in a variety of fields to the world. Since then, we have reached up to 7500 visitors on a single day and reached multiple millions of technology.

Come what may, the next 8 years will be awesome, with new solutions, more topics, more languages and videos!

Visitor map in 2020

A good start is to use -P 4 -n 1 to run 4 processes in parallel (-P 4), but give each instance of the command to be run just one argument (-n 1)

These are the xargs options for parallel use from the xargs manpage:

-P, --max-procs=MAX-PROCS    run at most MAX-PROCS processes at a time
-n, --max-args=MAX-ARGS      use at most MAX-ARGS arguments per command line

Example:

cat urls.txt | xargs -P 4 -n 1 wget

This command will run up to 4 wget processes in parallel until each of the URLs in urls.txt has been downloaded. These processes would be run in parallel:

wget [URL #1]
wget [URL #2]
wget [URL #3]
wget [URL #4]

If you used -P 4 -n 2 instead, these processes would be run in parallel:

wget [URL #1] [URL #2]
wget [URL #3] [URL #4]
wget [URL #5] [URL #6]
wget [URL #7] [URL #8]

Using a higher value for -n might slightly increase the efficiency since fewer processes need to be initialized, but it might not work with some commands if you pass multiple arguments.
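To see how -n groups arguments without downloading anything, a small sketch can substitute echo for the real command. The function name show_batches and the placeholder URLs are made up for illustration, and -P is left out so the output order stays predictable.

```shell
#!/bin/sh
# Print the command lines xargs would build with -n 2, using echo as a
# stand-in for wget so nothing is actually downloaded.
show_batches() {
    printf '%s\n' "$@" | xargs -n 2 echo wget
}

# Placeholder arguments (hypothetical, not real URLs)
show_batches url1 url2 url3 url4 url5
```

With five inputs, the last command line receives only one argument, which is what xargs does whenever the input does not divide evenly.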

You can use a regular expression to grep for href="..." attributes in an HTML document like this:

grep -oP "(HREF|href)=\"\K.+?(?=\")"

grep is run with -o (print only the matching part of each line) and -P (use the Perl-compatible regular expression engine, which is required for extra features like \K and lookahead assertions). The regular expression is basically

href=".+"

where the .+ is used in non-greedy mode (.+?):

href=".+?"

This will give us hits like

href="/files/image.png"

Since we only want the content of the quotes (") and not the href="..." part, we can use \K, which works much like a positive lookbehind assertion by dropping everything matched so far from the output, to remove the href part:

href=\"\K.+?\"

but we also want to get rid of the closing double quote. In order to do this, we can use positive lookahead assertions ((?=\")):

href=\"\K.+?(?=\")

Now we want to match both href and HREF to get some case insensitivity:

(href|HREF)=\"\K.+?(?=\")

Often we want to specifically match one file type. For example, we could match only .png:

(href|HREF)=\"\K.+?\.png(?=\")

In order to avoid overly long matches on some pages, we want to use [^\"]+? instead of .+?:

(href|HREF)=\"\K[^\"]+?\.png(?=\")

This disallows matches containing " characters, hence preventing the match from running past the end of the attribute.

Usage example:

wget -qO- https://nasagrace.unl.edu/data/NASApublication/maps/ | grep -oP "(href|HREF)=\"\K[^\"]+?\.png(?=\")"

Output:

/data/NASApublication/maps/GRACE_SFSM_20201026.png [...]
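The final expression can also be tried offline against a small piece of HTML. The following is a minimal sketch, assuming GNU grep with PCRE support; the function name extract_png_links and the sample markup are hypothetical. Single quotes are used around the pattern so the double quotes need no backslash escaping.

```shell
#!/bin/sh
# Extract the targets of href attributes ending in .png from HTML on stdin.
# Requires GNU grep built with PCRE support (-P).
extract_png_links() {
    grep -oP '(href|HREF)="\K[^"]+?\.png(?=")'
}

# Hypothetical sample input, not taken from a real page
printf '%s\n' '<a href="/maps/a.png">A</a> <a HREF="/maps/b.jpg">B</a> <a HREF="/maps/c.png">C</a>' \
    | extract_png_links
```

Only the two .png targets are printed, one per line; the .jpg link is skipped because [^"]+? cannot cross the closing quote to reach a later .png.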

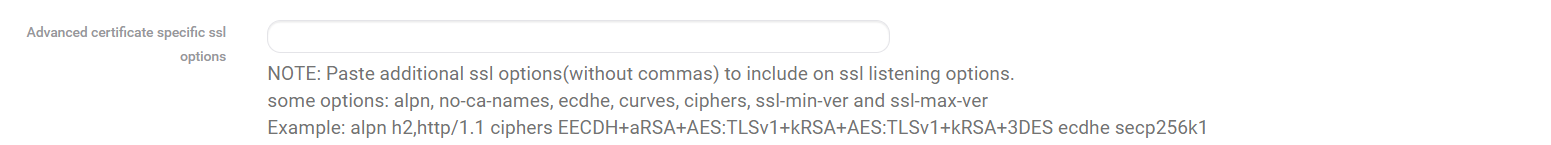

Go to the HAProxy frontend settings. For each individual frontend (not just the primary frontend), scroll down or search for alpn using the on-page search. You should see:

Paste or append this content there:

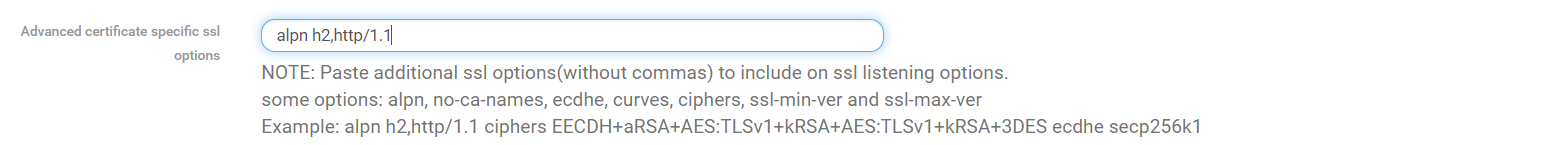

alpn h2,http/1.1

It should now look like this:

Now Save the settings and reload HAProxy.

After you use Ctrl+F5 to reload the pages for which you just activated HTTP/2, you should have an HTTP/2 connection.

This systemd unit file autostarts your NodeJS service using npm start. Place it in /etc/systemd/system/myapplication.service (replace myapplication by the name of your application!)

[Unit]
Description=My application

[Service]
Type=simple
Restart=always
User=nobody
Group=nobody
WorkingDirectory=/opt/myapplication
ExecStart=/usr/bin/npm start

[Install]
WantedBy=multi-user.target

Replace:

- /opt/myapplication with the directory of your application (where package.json is located)
- User=nobody and Group=nobody with the user and group you want to run the service as
- Description=My application with a description of your application

Then enable start at boot & start right now (remember to replace myapplication with the name of the service file you chose!):

sudo systemctl enable --now myapplication

Start by

sudo systemctl start myapplication

Restart by

sudo systemctl restart myapplication

Stop by

sudo systemctl stop myapplication

View & follow logs:

sudo journalctl -xfu myapplication

View logs in less:

sudo journalctl -xu myapplication

Create /var/lib/mongodb/docker-compose.yml:

version: '3.1'
services:
  mongo:
    image: mongo
    volumes:
      - ./mongodb_data:/data/db
    ports:
      - 27017:27017

This will store the MongoDB data in /var/lib/mongodb/mongodb_data. I prefer this variant to using docker volumes since this method keeps all MongoDB-related data in the same directory.

Then create a systemd service using

curl -fsSL https://techoverflow.net/scripts/create-docker-compose-service.sh | sudo bash /dev/stdin

See our post on how to Create a systemd service for your docker-compose project in 10 seconds for more details on this method.

You can access MongoDB at localhost:27017! It will autostart after boot

Restart by

sudo systemctl restart mongodb

Stop by

sudo systemctl stop mongodb

View logs:

sudo journalctl -xfu mongodb

View logs in less:

sudo journalctl -xu mongodb

You have mounted a shared directory on your Synology NAS using NFS. The mount succeeds, but when you try to access the mount point (e.g. ls /nas) you see a Permission denied error even if running as root.

In the Synology NAS web UI, open the Control Panel, go to Shared Folder and edit the permissions for the shared folder you’re trying to access (right click => edit).

You likely have checked the No access checkbox for the admin user. Uncheck it, then click OK on the bottom right.

Now your NFS share should work again (even without remounting).

Warning: Depending on your application, disabling the SELinux security layer might be a bad idea since it might introduce new security risks especially if the container system has security issues.

In order to disable SELinux on Fedora CoreOS, run this:

sudo sed -i -e 's/SELINUX=/SELINUX=disabled #/g' /etc/selinux/config
sudo systemctl reboot

Note that this will reboot your system in order for the changes to take effect.

The sed command will replace the default

SELINUX=enforcing

in /etc/selinux/config with

SELINUX=disabled #enforcing

The previous value is left behind the # on the same line.
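Before touching the real file, the substitution can be previewed on a scratch file. This is a minimal sketch, assuming GNU sed; the function name preview_selinux_edit is hypothetical and the two sample lines are an assumption modeled on a typical config, not a copy of your /etc/selinux/config.

```shell
#!/bin/sh
# Apply the same sed expression to a throwaway file instead of
# /etc/selinux/config, then print the result for inspection.
preview_selinux_edit() {
    tmpfile="$(mktemp)"
    # Assumed sample contents for illustration only
    printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$tmpfile"
    sed -i -e 's/SELINUX=/SELINUX=disabled #/g' "$tmpfile"
    cat "$tmpfile"
    rm -f "$tmpfile"
}

preview_selinux_edit
```

The SELINUXTYPE line stays untouched because the pattern requires the = directly after SELINUX.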

You can enable SSH in GRML using the ssh boot option. But if you have already booted GRML without it, you can enable SSH using

Start ssh

Also remember to set a root password:

passwd

Run

mdadm --assemble --scan

and you’ll see all your MD devices.

This is the Ignition config that I use to bring up my Fedora CoreOS instance on a VM on my XCP-NG server:

{
  "ignition": {
    "version": "3.2.0"
  },
  "passwd": {
    "users": [
      {
        "groups": [
          "sudo",
          "docker"
        ],
        "name": "uli",
        "sshAuthorizedKeys": [
          "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAAAgQDpvDSxIwnyMCFtIPRQmPUV6hh9lBJUR0Yo7ki+0Vxs+kcCHGjtcgDzcaHginj1zvy7nGwmcuGi5w83eKoANjK5CzpFT4vJeiXqtGllh0w+B5s6tbSsD0Wv3SC9Xc4NihjVjLU5gEyYmfs/sTpiow225Al9UVYeg1SzFr1I3oSSuw== sample@host"
        ]
      }
    ]
  },
  "storage": {
    "files": [
      {
        "path": "/etc/hostname",
        "contents": {
          "source": "data:,coreos-test%0A"
        },
        "mode": 420
      },
      {
        "path": "/etc/profile.d/systemd-pager.sh",
        "contents": {
          "source": "data:,%23%20Tell%20systemd%20to%20not%20use%20a%20pager%20when%20printing%20information%0Aexport%20SYSTEMD_PAGER%3Dcat%0A"
        },
        "mode": 420
      },
      {
        "path": "/etc/sysctl.d/20-silence-audit.conf",
        "contents": {
          "source": "data:,%23%20Raise%20console%20message%20logging%20level%20from%20DEBUG%20(7)%20to%20WARNING%20(4)%0A%23%20to%20hide%20audit%20messages%20from%20the%20interactive%20console%0Akernel.printk%3D4"
        },
        "mode": 420
      }
    ]
  },
  "systemd": {
    "units": [
      {
        "enabled": true,
        "name": "docker.service"
      },
      {
        "enabled": true,
        "name": "containerd.service"
      },
      {
        "dropins": [
          {
            "contents": "[Service]\n# Override Execstart in main unit\nExecStart=\n# Add new Execstart with `-` prefix to ignore failure\nExecStart=-/usr/sbin/agetty --autologin core --noclear %I $TERM\nTTYVTDisallocate=no\n",
            "name": "autologin-core.conf"
          }
        ],
        "name": "serial-getty@ttyS0.service"
      }
    ]
  }
}

which is built from this YAML:

variant: fcos
version: 1.2.0
passwd:
  users:
    - name: uli
      groups:
        - "sudo"
        - "docker"
      ssh_authorized_keys:
        - "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAAAgQDpvDSxIwnyMCFtIPRQmPUV6hh9lBJUR0Yo7ki+0Vxs+kcCHGjtcgDzcaHginj1zvy7nGwmcuGi5w83eKoANjK5CzpFT4vJeiXqtGllh0w+B5s6tbSsD0Wv3SC9Xc4NihjVjLU5gEyYmfs/sTpiow225Al9UVYeg1SzFr1I3oSSuw== sample@computer"
systemd:
  units:
    - name: docker.service
      enabled: true
    - name: containerd.service
      enabled: true
    - name: serial-getty@ttyS0.service
      dropins:
        - name: autologin-core.conf
          contents: |
            [Service]
            # Override Execstart in main unit
            ExecStart=
            # Add new Execstart with `-` prefix to ignore failure
            ExecStart=-/usr/sbin/agetty --autologin core --noclear %I $TERM
            TTYVTDisallocate=no
storage:
  files:
    - path: /etc/hostname
      mode: 0644
      contents:
        inline: |
          coreos-test
    - path: /etc/profile.d/systemd-pager.sh
      mode: 0644
      contents:
        inline: |
          # Tell systemd to not use a pager when printing information
          export SYSTEMD_PAGER=cat
    - path: /etc/sysctl.d/20-silence-audit.conf
      mode: 0644
      contents:
        inline: |
          # Raise console message logging level from DEBUG (7) to WARNING (4)
          # to hide audit messages from the interactive console
          kernel.printk=4

using

fcct --pretty --strict ignition.yml --output ignition.ign

or TechOverflow’s online transpiler tool.
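One detail that can look like a mismatch: the YAML gives the file mode as 0644 (octal), while the generated Ignition JSON contains 420, which is the same number written in decimal. A small sketch to check such values in either direction; the function names are made up for illustration.

```shell
#!/bin/sh
# Convert between the octal file mode written in the YAML (0644)
# and the decimal value that appears in the generated JSON (420).
octal_to_decimal() {
    # A leading 0 makes printf read the argument as an octal constant
    printf '%d\n' "0$1"
}

decimal_to_octal() {
    printf '%o\n' "$1"
}

octal_to_decimal 644   # prints 420
decimal_to_octal 420   # prints 644
```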

Install using:

sudo coreos-installer install /dev/xvda --copy-network --ignition-url https://mydomain.com/ignition.ign

Features:

Important note: While installing the Xen utilities using the CD ISO still works, it is outdated and you should prefer installing it using the rpm package. See our post Fedora CoreOS: How to install Xen/XCP-NG guest utilities using rpm-ostree

First, insert the guest-tools.iso supplied with XCP-NG into the DVD drive of the virtual machine.

Then run this sequence of commands to install. Note that this will reboot the CoreOS instance!

curl -fsSL https://techoverflow.net/scripts/install-xenutils-coreos.sh | sudo bash /dev/stdin

This will run the following script:

sudo mount -o ro /dev/sr0 /mnt
sudo rpm-ostree install /mnt/Linux/*.x86_64.rpm
sudo cp -f /mnt/Linux/xen-vcpu-hotplug.rules /etc/udev/rules.d/
sudo cp -f /mnt/Linux/xe-linux-distribution.service /etc/systemd/system/
sudo sed 's/share\/oem\/xs/sbin/g' -i /etc/systemd/system/xe-linux-distribution.service
sudo systemctl daemon-reload
sudo systemctl enable /etc/systemd/system/xe-linux-distribution.service
sudo umount /mnt
sudo systemctl reboot

After rebooting the VM, XCP-NG should detect the management agent.

Based on work by steniofilho on the Fedora Forum.

Please eject the guest tools medium from the machine after the reboot! Sometimes unnecessarily mounted media cause issues.

In order to list VMs on the command line, login to XCP-NG using SSH and run this command:

xe vm-list

Example output:

[16:51 virt01-xcpng ~]# xe vm-list

uuid ( RO) : 56dc99f2-c617-f7a9-5712-a4c9df54229a

name-label ( RW): VM 1

power-state ( RO): running

uuid ( RO) : 268d56ab-9672-0f45-69ae-efbc88380b21

name-label ( RW): VM2

power-state ( RO): running

uuid ( RO) : 9b1a771f-fb84-8108-8e01-6dac0f957b19

name-label ( RW): My VM 3

power-state ( RO): running
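If only the VM names are needed, the output can be filtered with standard tools. This is a minimal sketch that relies only on the output layout shown above; the function name vm_names is hypothetical, and the sample input is copied from the example output.

```shell
#!/bin/sh
# Print only the name-label values from `xe vm-list` style output on stdin.
vm_names() {
    sed -n 's/^ *name-label[^:]*: *//p'
}

# Sample input copied from the example output above; on the XCP-NG host
# this would be:  xe vm-list | vm_names
printf '%s\n' \
    'uuid ( RO) : 56dc99f2-c617-f7a9-5712-a4c9df54229a' \
    'name-label ( RW): VM 1' \
    'power-state ( RO): running' \
    | vm_names
```

Names that contain spaces, such as My VM 3, are kept intact because everything after the first colon on the name-label line is printed.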

This is the OpenVPN config I use for connecting an OpenWRT router to a pfsense, providing interconnectivity between both LANs.

nobind
persist-key
cipher AES-256-CBC
dev tun
ifconfig 10.22.51.2 10.22.51.1
keepalive 10 60
port 1194
proto udp4
compress
remote myid.myfritz.net
resolv-retry infinite
route 192.168.100.0 255.255.255.0
verb 5
auth SHA512
<secret>
#
# 2048 bit OpenVPN static key
#
-----BEGIN OpenVPN Static key V1-----
97aae54ce3e22128c0efba9043a6ba07
03dc5a68399a7e7f65ab6d7cdc390729
a1f72e665fe7cf300edccb1555df56ff
3d2386942c7b78cf1676c5734834ea18
2c2ba33523e3278a84efe168dd160fd4
3c0205a0335765b80881cfb915e9b3de
097a63ee5321a31540c51a628ab95d0e
4f40657351125526120a1a83ec8af043
3ddbb859a6c8e2d36797ba5a983dd223
5ecea38941b8af992492887e6d361ccc
a41f1a3993f2c24011b2a3026b71c82d
12d301cb346de19dcaa550886b5dd0c0
9b4d6bd0ca7415a42e4ffa10fe39659e
e9ad0ff1edcfa2d62c3e3db2834f0da5
fe8e4c332325a195c537551a6f1a0ff5
c5bd5d7b038c7a9df9c8d28cb33ef4b0
-----END OpenVPN Static key V1-----
</secret>

where:

- 10.22.51.0/24 is the VPN transfer net (IPv4 tunnel network in the pfsense), hence 10.22.51.2 is the IP address of the OpenWRT client and 10.22.51.1 is the IP address of the pfsense (i.e. OpenVPN server)
- 1194 is the port to connect to (I use only UDP VPNs for most setups)
- myid.myfritz.net is the domain name of the pfsense, which is (in this case) running behind a FritzBox router using a myfritz dynamic DNS server
- <secret> is the static key that is configured in the pfsense.

See pfsense-OpenWRT-OpenVPN-Config.pdf for the entire pfsense config.

The most important aspects are to:

- select Disable Compression, retain compression packet framing [compress] (since we don’t have a comp directive in the client config)
- select Peer to Peer ( Shared Key )
- select 1194 UDP if you’re using that port

Instead of using a directive like

secret static.key

in your OpenVPN config, you can also use an inline key:

<secret>
// Copy & paste OpenVPN static key here !!
</secret>

A full example OpenVPN config looks like this:

nobind
persist-key
cipher AES-256-CBC
dev tun
ifconfig 10.92.11.2 10.92.11.1
keepalive 10 60
port 1194
proto udp4
remote mydomain.net
resolv-retry infinite
route 192.168.9.0 255.255.255.0
secret /dev/urandom
verb 5
auth SHA512
<secret>
#
# 2048 bit OpenVPN static key
#
-----BEGIN OpenVPN Static key V1-----
97aae54ce3e22128c0efba9043a6ba07
03dc5a68399a7e7f65ab6d7cdc390729
a1f72e665fe7cf300edccb1555df56ff
3d2386942c7b78cf1676c5734834ea18
2c2ba33523e3278a84efe168dd160fd4
3c0205a0335765b80881cfb915e9b3de
097a63ee5321a31540c51a628ab95d0e
4f40657351125526120a1a83ec8af043
3ddbb859a6c8e2d36797ba5a983dd223
5ecea38941b8af992492887e6d361ccc
a41f1a3993f2c24011b2a3026b71c82d
12d301cb346de19dcaa550886b5dd0c0
9b4d6bd0ca7415a42e4ffa10fe39659e
e9ad0ff1edcfa2d62c3e3db2834f0da5
fe8e4c332325a195c537551a6f1a0ff5
c5bd5d7b038c7a9df9c8d28cb33ef4b0
-----END OpenVPN Static key V1-----
</secret>

Generate an OpenVPN static key and save it to static.key:

openvpn --genkey --secret static.key

The key looks like this:

#
# 2048 bit OpenVPN static key
#
-----BEGIN OpenVPN Static key V1-----
97aae54ce3e22128c0efba9043a6ba07
03dc5a68399a7e7f65ab6d7cdc390729
a1f72e665fe7cf300edccb1555df56ff
3d2386942c7b78cf1676c5734834ea18
2c2ba33523e3278a84efe168dd160fd4
3c0205a0335765b80881cfb915e9b3de
097a63ee5321a31540c51a628ab95d0e
4f40657351125526120a1a83ec8af043
3ddbb859a6c8e2d36797ba5a983dd223
5ecea38941b8af992492887e6d361ccc
a41f1a3993f2c24011b2a3026b71c82d
12d301cb346de19dcaa550886b5dd0c0
9b4d6bd0ca7415a42e4ffa10fe39659e
e9ad0ff1edcfa2d62c3e3db2834f0da5
fe8e4c332325a195c537551a6f1a0ff5
c5bd5d7b038c7a9df9c8d28cb33ef4b0
-----END OpenVPN Static key V1-----
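Once a key file such as static.key exists, it can be wrapped in secret tags for inline use instead of being copied by hand. This is a minimal sketch, not part of OpenVPN itself; the function name inline_secret and the config file name client.conf are assumptions for illustration.

```shell
#!/bin/sh
# Wrap the contents of a static key file in <secret> tags so the result
# can be pasted into or appended to an OpenVPN config.
inline_secret() {
    printf '<secret>\n'
    cat "$1"
    printf '</secret>\n'
}

# Usage (assumes static.key exists and client.conf is your config file):
#   inline_secret static.key >> client.conf
```

If you append the block this way, remember to remove any existing secret directive that points at a key file, so the config contains only one source for the key.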

I have configured my Synology NAS to use NFSv4 and NFSv4.1. For certain fixed IP addresses, I have allowed passwordless mounting of specific NFS shares.

This is my mount line in /etc/fstab

10.1.2.3:/volume1/myfolder /mnt/myfolder nfs async,soft,auto 0 0

where:

- 10.1.2.3 is the IP address of the NAS in the VPN
- myfolder is the name of the Synology share

This configuration has been verified to work even when connecting via an OpenVPN connection that connects to a DSL client with an IP address that changes every 24 hours, leading to a disconnect of about 30 seconds. However, you should still test it in your specific configuration.

Log into your router using ssh root@ip-addr and run these commands:

opkg update
opkg install openvpn-openssl luci-app-openvpn
/etc/init.d/uhttpd restart
echo "Done"

Done !